Advent of Code, the gauntlet of programming puzzles that Eric Wastl runs each year from December 1 through December 25, is a big event for me. It’s the one time of the year when I can get out of the humdrum of programming for an insurance company and really get to think about problems. Problems like: how many puzzles can I solve in a new programming language before I say “Fuck it, I’ll do it in Python”?

(Usually three or four.)

One year, I started out doing the puzzles as games in the “fantasy retro console” Pico-8. Another year, I made one of the later puzzles into an isometric roguelike that I then turned in for the following spring’s 7 Day Roguelike competition.

My past couple of years haven’t been wild successes. The puzzles got too hard for me to wrap my mind around, and rather than seek help on Reddit, I just quit. I always meant to go back and finish them but, well, I didn’t.

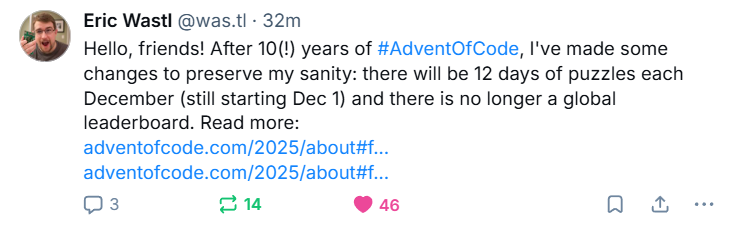

I’m not super surprised to find that Eric has decided to cut down on the number of puzzles in AoC. He’s been clear for years that preparing for AoC takes a good portion of his year, what with developing the puzzles and having testers solve them so that he can smash the bugs and set the difficulty appropriately.

I was a little more surprised that he’s deleting the global leaderboard. Some people, it seems, are a little too excited by it, even going so far as to DDoS it to keep people from updating. Over the past couple of years, but especially last year, the top spots on the leaderboard went to people who had completed both halves of the usually pretty tough puzzles in less than a minute, in many cases much less. The global leaderboard had been made pointless by AI, by very fast solvers, or by very fast solvers using AI to simplify the puzzle for them.

And Eric is very clear about the use of AI:

> Should I use AI to solve Advent of Code puzzles? No. If you send a friend to the gym on your behalf, would you expect to get stronger? Advent of Code puzzles are designed to be interesting for humans to solve - no consideration is made for whether AI can or cannot solve a puzzle. If you want practice prompting an AI, there are almost certainly better exercises elsewhere designed with that in mind.

I’ll be up front: I have been programming with GitHub Copilot for some time, and I use it as part of my workflow both at work and at home. I’ve never dumped a puzzle into Copilot and asked it to pop out a solution; it’s more useful for setting up infrastructure, like timers and file parsing and stuff. I have asked ChatGPT to solve problems after I’ve solved them, just to see if it can. Generally, it doesn’t do so hot. But clearly the top solvers have better AI than I do, and that’s fine. I agree with Eric here: I do AoC to challenge myself, not to prove I can AI better than someone else.

You can read about all the changes for 2025 in the FAQ for AoC 2025.